Grounding yourself as a programmer in the AI era

MP #22: Guiding questions for making sense of AI's impact on programmers and programming.

When I started this newsletter just three months ago, I wasn’t planning to write much about AI at all. In that short time, however, much more capable tools have been released. I’ve been tempted to start a second newsletter about developments in the AI world, but separating Python from AI tools doesn’t reflect reality. We can argue about how much things are changing, but the ground is clearly shifting beneath us in the programming world. We can’t really discuss Python without looking at how new tools and resources are changing the way we interact with code.

One of the things I’ve been most curious about is how the code that AI tools generate compares to the code I’d write on my own. If AI is going to write more of our code, is it going to take us in a new direction? Or is it just going to make it easier for us to do what we’ve already been doing?

This is the first post in a six-part series:

Part 1: When you’re trying to make sense of any big change, a set of guiding questions is really helpful. I’ll introduce a set of guiding questions that help us move forward, instead of just watching as everything changes around us.

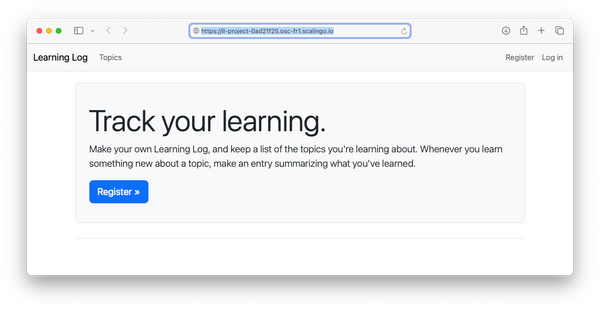

Part 2: Recently I’ve needed to add borders to screenshots, and I was surprised to find there’s no built-in utility for doing this on macOS. I wrote a small utility in Python to do this, and it’s been really useful. In this post I’ll show how I developed the project, without any reference to AI.

Part 3: The utility has been useful enough that I want to add some new features, and this is typically where I take some time to refactor my initial exploratory code. In this post I’ll refactor the project as I normally would, again without any assistance from AI tools.

Part 4: Starting with the code from Part 2, we’ll go through the refactoring process again, but this time with the help of an AI assistant. We’ll critique the code that results from each approach, as well as the two workflows.

Part 5: This is a useful utility, so we’ll package the project up and make it available on PyPI. By the end of this post, you’ll be able to use pip to install the project.

Part 6: In the final post, we’ll consider the takeaways from this brief experiment. We’ll revisit the guiding questions in the context of this small real-world project, and think about what this suggests for larger projects as well.

If you’re watching recent developments in AI and thinking about the impact on your work, I think you’ll appreciate this short series. If you’re tired of AI takes, I encourage you to skim each post as it comes out. The focus will be on how effectively these tools write Python code, and that will include a significant focus on the technical aspects of developing and maintaining Python projects.

Guiding questions

As a teacher, I’ve always used guiding questions to focus learning. I’ve given sets of guiding questions to students, helped them craft their own, and developed questions to guide my own learning. A good set of guiding questions does exactly what it sounds like: it guides your learning and shapes your thinking in a way that helps you get where you want to be. It helps you avoid random inquiries that leave you no clearer about a topic than you were before. It also helps you recognize when you’ve learned enough about a topic to make some decisions, move out of a learning phase, and get back into a doing phase. And it helps you avoid getting caught up in everyone else’s latest hot take, which is happening on a daily (and hourly!) basis at this point.

What follows are the kinds of questions I’ve been asking myself and others recently. These are the questions I keep in mind when reading or listening to other people’s thoughts on recent developments. I won’t try to answer all of them; anyone who claims to have clear answers to all these questions is either overconfident, has access to a time machine, or is trying to sell something.

Evaluating claims about AI

What is “AI”?

What’s the distinction between artificial intelligence and artificial consciousness?

What AI tools are people using? What tools are currently being worked on?

How do these tools work?

When is it helpful to treat AI as a black box? When is it helpful to understand what’s happening “inside the box”?

AI’s impact on programming and programmers

What kinds of tasks and problems is AI most suited to?

What kinds of tasks and problems do AI tools struggle with the most? How quickly is this changing?

What does it mean for all of us if anyone with access to an AI assistant can create custom tools that solve their own problems?

We can imagine people building tools and businesses around AI-generated codebases, and asking AI tools to troubleshoot any new issues that arise. What are the boundaries of this process? When will human programmers need to be pulled into these kinds of projects? What will these programmers need to know in order to troubleshoot complex AI output?

If most people start with working programs rather than blank IDE windows, what are the new fundamentals of programming? What should people be focused on learning? What becomes less important to know and study?

What impact will AI have on open source? Many OSS maintainers and contributors enjoy working with people as much as they draw satisfaction from offering a quality product. What happens if the primary consumers of open source projects are AI assistants, rather than human programmers?

What do we need to be careful about as we start to use AI tools?

Personal questions

What’s my role in an AI-assisted world?

What should I continue learning about? What new things should I learn?

What should we tell our kids about growing up in an AI-assisted world?

What responsibilities do we, who are perhaps in the best position to understand these tools, have in helping others understand them? What role do we have in helping others adopt these tools in a helpful rather than harmful way?

What if AI tools put me out of work, and I can’t find meaningful work again?

What parts of my life can AI never touch?

Some of these questions may seem far-fetched. However, I’m certain that individual people are already wrestling with every one of these questions today.

A few specific thoughts

As I said earlier, I don’t think anyone can claim to have clear answers to all these questions. But having listed them, I’ll share some initial thoughts.

When thinking about my ongoing professional work, I try not to get too caught up in the question “Is this really AGI?” It’s a fascinating topic and I’m happy to discuss it, but it’s also been helpful to take a very practical perspective. The key thing I’m seeing is that GPT is already helping people work more efficiently and effectively across a broad range of professions. It’s not a “glorified code completion” tool. Practically, we need to recognize that it’s already changing the way people work. If you wait until it hits your personal or professional circle, you’ll be stuck reacting to new workflows and professional situations. I’d much rather anticipate changes, and make proactive decisions about my own work and the work that others are doing.

I think it’s always going to be beneficial to learn about code. The more you know about programming, the better off you’ll be. But it’s not going to be as necessary as it once was, and it’s not going to be sufficient on its own. If you can make a business happen with no specific coding experience while an AI generates all the code you need, it may be in your best interest to run with that and not worry about what other programmers think. On the other hand, if the code you’re capable of writing can easily be generated by an AI tool, your skills are going to be much less in demand. This is an interesting place to be, and there are a lot of people in that position right now. There are many roads forward, but I’ll save a full discussion of this for a later post.

As you start to use AI tools, please be careful about what you dump into these systems. You need to give them context in order to get meaningful output and to be more productive. But many people are nonchalantly dumping sensitive, proprietary code, data, and information into AI tools that they wouldn’t think of sharing anywhere else online. I don’t mention Hacker News often, but here’s a really eye-opening thread if you haven’t been paying attention to this issue. People are in a hard spot: they feel like they have to use AI to keep up, but using AI is likely a security nightmare. We’re almost certainly going to see data leak fiascos of a different nature than what we’ve seen before, and I sure wouldn’t want to be at the center of these incidents.

I’m trying to learn what I can about how these tools work, to the point where I can evaluate the claims that are being made about them. I’m starting to use them myself, to develop firsthand experience with how well they work and how to use them effectively.[1] I’m listening to the thoughts of others, both technical and nontechnical people. It’s a great time to go have a cup of coffee with a friend who majored in philosophy.

I’m also making time to enjoy the things I know AI will never touch. I recently started learning piano, and I’ve been making sure to run and hike on a regular basis. I’ve enjoyed face-to-face conversations a lot more recently, knowing that none of the interaction is being mediated by an AI. Making time for these nontechnical experiences helps me keep AI advances in perspective.

Conclusions

No one has concrete answers to the kinds of questions raised here, because the ground is shifting under everyone’s feet. But asking the right questions can guide our thinking, and bring us to a more grounded place as humans, and as programmers. I hope this set of questions and initial thoughts helps some people start to make sense of the rapid changes we’re all seeing, and about to see.

Please share your thoughts. I’d love to know what you’re seeing, what you’re wondering, what you’re doing, and how you’re being impacted by the rapid adoption of AI tools in our community. Anyone is welcome to leave a comment. If you’re a paid subscriber, feel free to join the chat. And if you’re not comfortable sharing your thoughts publicly, please feel free to reply directly to this email.

Thank you, and I wish you well.

[1] I am not using GPT to write any of the text on Mostly Python, and have no intention of ever doing so. If I use it to generate code, I’m calling that out explicitly for the time being.